前言#

为了预习大三课程,想提前学习下 PyTorch。

于是我遇到了神仙学习教程《动手学深度学习》,同时以此为参考完成了环境配置,感谢大佬们无私奉献 Thanks♪(・ω・)ノ

本教程展示了独显 windows 电脑用 WSL Ubuntu 子系统跑 PyTorch 深度学习的环境配置,至于为什么用 WSL 嘛。因为自己测试一番发现性能比 win 强很多。篇幅有限,省略 WSL 安装过程。

安装#

设备:#

Windows11: WSL-Ubuntu-22.04

1660ti-6g 独显

Miniconda 安装#

Miniconda 官网 wget 方式配合链接下载对应 Linux 版本

wget https://repo.anaconda.com/miniconda/Miniconda3-py310_23.3.1-0-Linux-x86_64.sh

sh 指令默认安装

sh Miniconda3-py310_23.3.1-0-Linux-x86_64.sh -b

初始化环境

~/miniconda3/bin/conda init

提示关闭该 Terminal,重新打开一个。

创建新环境,名称 d2l 可更改。

conda create --name d2l python=3.9 -y

启动环境

conda activate d2l

注意每次运行都要运行此指令,切换到 d2l 环境。

如要退出当前环境:conda deactivate。

如要完整删除名为dal的环境:conda remove -n d2l --all

CUDA 安装#

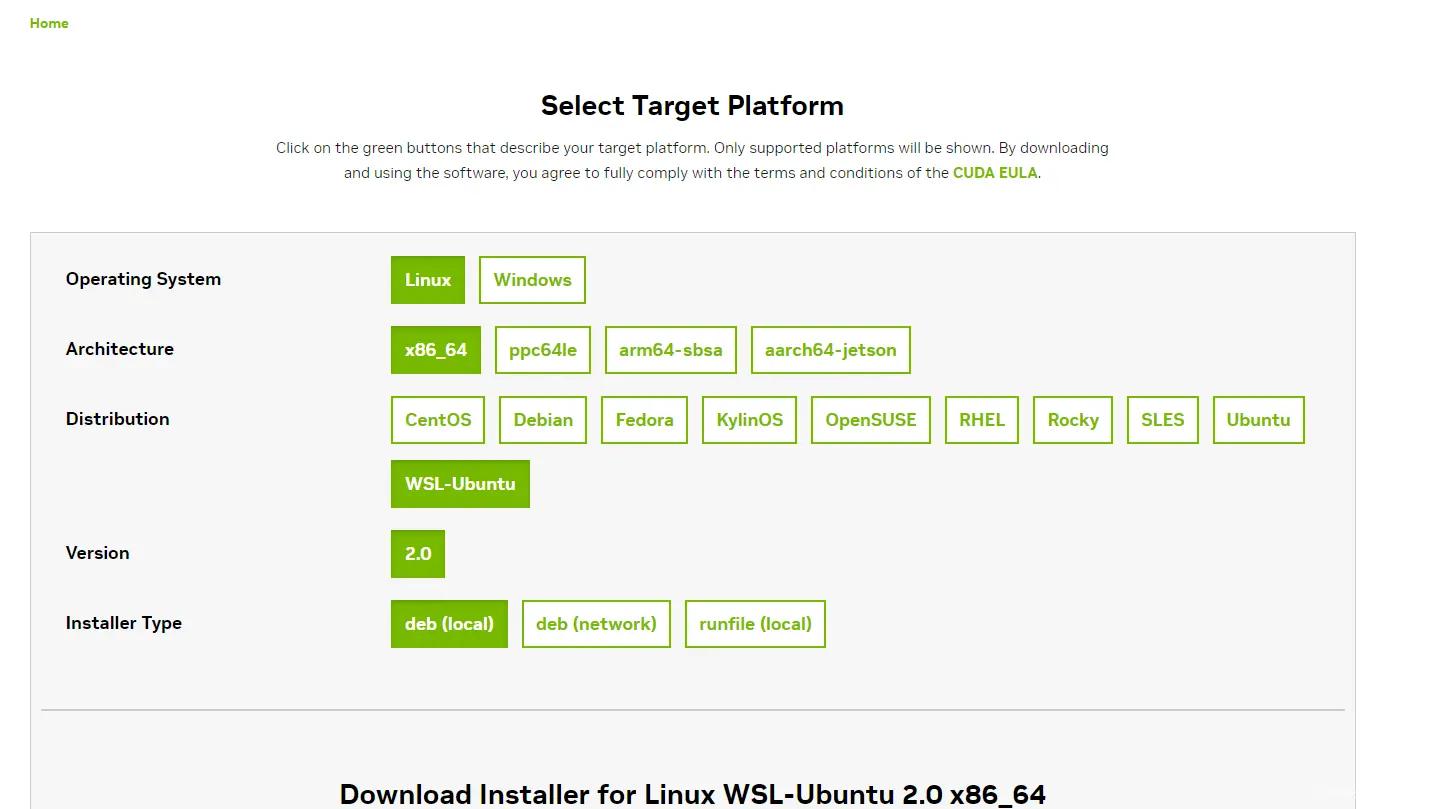

CUDA(官网)是英伟达官方的深度学习工具包,如图是 WSL 的选项。运行下载代码。

wget https://developer.download.nvidia.com/compute/cuda/repos/wsl-ubuntu/x86_64/cuda-wsl-ubuntu.pin

sudo mv cuda-wsl-ubuntu.pin /etc/apt/preferences.d/cuda-repository-pin-600

wget https://developer.download.nvidia.com/compute/cuda/12.1.1/local_installers/cuda-repo-wsl-ubuntu-12-1-local_12.1.1-1_amd64.deb

sudo dpkg -i cuda-repo-wsl-ubuntu-12-1-local_12.1.1-1_amd64.deb

sudo cp /var/cuda-repo-wsl-ubuntu-12-1-local/cuda-*-keyring.gpg /usr/share/keyrings/

sudo apt-get update

sudo apt-get -y install cuda

添加完成后需更新~/.bashrc文件

sudo vi ~/.bashrc

i进入 insert 模式,添加以下代码到文件最后,注意修改为对应版本。

export PATH=/usr/local/cuda-12.1/bin${PATH:+:${PATH}}

export LD_LIBRARY_PATH=/usr/local/cuda-12.1/lib64\

${LD_LIBRARY_PATH:+:${LD_LIBRARY_PATH}}

Esc, :wq,回车保存。

source ~/.bashrc

运行以下代码,输出如图则 CUDA 安装成功。

nvcc -V

安装 PyTorch 框架#

GPU 版本的需要在PyTorch 官网选择,我下的是 Preview 版本的刚好适配 CUDA12.1 如图,不过向下兼容性也不错。

conda install pytorch torchvision torchaudio pytorch-cuda=12.1 -c pytorch-nightly -c nvidia

下载 d2l 包

pip install d2l==0.17.6

显卡测试#

克隆测试文件

git clone https://github.com/pytorch/examples.git

cd example_pytorch/mnist/

替换main.py文件内容为以下:

from __future__ import print_function

import argparse

import torch

import time

import torch.nn as nn

import torch.nn.functional as F

import torch.optim as optim

from torchvision import datasets, transforms

from torch.optim.lr_scheduler import StepLR

class Net(nn.Module):

def __init__(self):

super(Net, self).__init__()

self.conv1 = nn.Conv2d(1, 32, 3, 1)

self.conv2 = nn.Conv2d(32, 64, 3, 1)

self.dropout1 = nn.Dropout(0.25)

self.dropout2 = nn.Dropout(0.5)

self.fc1 = nn.Linear(9216, 128)

self.fc2 = nn.Linear(128, 10)

def forward(self, x):

x = self.conv1(x)

x = F.relu(x)

x = self.conv2(x)

x = F.relu(x)

x = F.max_pool2d(x, 2)

x = self.dropout1(x)

x = torch.flatten(x, 1)

x = self.fc1(x)

x = F.relu(x)

x = self.dropout2(x)

x = self.fc2(x)

output = F.log_softmax(x, dim=1)

return output

def train(args, model, device, train_loader, optimizer, epoch):

model.train()

for batch_idx, (data, target) in enumerate(train_loader):

data, target = data.to(device), target.to(device)

optimizer.zero_grad()

output = model(data)

loss = F.nll_loss(output, target)

loss.backward()

optimizer.step()

if batch_idx % args.log_interval == 0:

print('Train Epoch: {} [{}/{} ({:.0f}%)]\tLoss: {:.6f}'.format(

epoch, batch_idx * len(data), len(train_loader.dataset),

100. * batch_idx / len(train_loader), loss.item()))

if args.dry_run:

break

def test(model, device, test_loader):

model.eval()

test_loss = 0

correct = 0

with torch.no_grad():

for data, target in test_loader:

data, target = data.to(device), target.to(device)

output = model(data)

test_loss += F.nll_loss(output, target, reduction='sum').item() # sum up batch loss

pred = output.argmax(dim=1, keepdim=True) # get the index of the max log-probability

correct += pred.eq(target.view_as(pred)).sum().item()

test_loss /= len(test_loader.dataset)

print('\nTest set: Average loss: {:.4f}, Accuracy: {}/{} ({:.0f}%)\n'.format(

test_loss, correct, len(test_loader.dataset),

100. * correct / len(test_loader.dataset)))

def main():

# Training settings

parser = argparse.ArgumentParser(description='PyTorch MNIST Example')

parser.add_argument('--batch-size', type=int, default=64, metavar='N',

help='input batch size for training (default: 64)')

parser.add_argument('--test-batch-size', type=int, default=1000, metavar='N',

help='input batch size for testing (default: 1000)')

parser.add_argument('--epochs', type=int, default=14, metavar='N',

help='number of epochs to train (default: 14)')

parser.add_argument('--lr', type=float, default=1.0, metavar='LR',

help='learning rate (default: 1.0)')

parser.add_argument('--gamma', type=float, default=0.7, metavar='M',

help='Learning rate step gamma (default: 0.7)')

parser.add_argument('--no-cuda', action='store_true', default=False,

help='disables CUDA training')

parser.add_argument('--no-mps', action='store_true', default=False,

help='disables macOS GPU training')

parser.add_argument('--dry-run', action='store_true', default=False,

help='quickly check a single pass')

parser.add_argument('--seed', type=int, default=1, metavar='S',

help='random seed (default: 1)')

parser.add_argument('--log-interval', type=int, default=10, metavar='N',

help='how many batches to wait before logging training status')

parser.add_argument('--save-model', action='store_true', default=False,

help='For Saving the current Model')

args = parser.parse_args()

use_cuda = not args.no_cuda and torch.cuda.is_available()

use_mps = not args.no_mps and torch.backends.mps.is_available()

torch.manual_seed(args.seed)

if use_cuda:

device = torch.device("cuda")

elif use_mps:

device = torch.device("mps")

else:

device = torch.device("cpu")

train_kwargs = {'batch_size': args.batch_size}

test_kwargs = {'batch_size': args.test_batch_size}

if use_cuda:

cuda_kwargs = {'num_workers': 5, #线程数

'pin_memory': True,

'shuffle': True}

train_kwargs.update(cuda_kwargs)

test_kwargs.update(cuda_kwargs)

transform=transforms.Compose([

transforms.ToTensor(),

transforms.Normalize((0.1307,), (0.3081,))

])

dataset1 = datasets.MNIST('../data', train=True, download=True,

transform=transform)

dataset2 = datasets.MNIST('../data', train=False,

transform=transform)

train_loader = torch.utils.data.DataLoader(dataset1,**train_kwargs)

test_loader = torch.utils.data.DataLoader(dataset2, **test_kwargs)

model = Net().to(device)

optimizer = optim.Adadelta(model.parameters(), lr=args.lr)

scheduler = StepLR(optimizer, step_size=1, gamma=args.gamma)

start_time = time.time()

for epoch in range(1, args.epochs + 1):

train(args, model, device, train_loader, optimizer, epoch)

test(model, device, test_loader)

scheduler.step()

end_time = time.time()

total_time = end_time - start_time

print(f"Total time: {total_time:.2f} seconds.")

if args.save_model:

torch.save(model.state_dict(), "mnist_cnn.pt")

if __name__ == '__main__':

main()

此处采用 5 线程,可自行修改。

运行对应模式测速,根据配置可能 cpu 和 gpu 运行代码刚好相反。

$ python main.py #cpu模式

$ CUDA_VISIBLE_DEVICES=2 python main.py #gpu模式

测试代码来源及参考:

pytorch/examples - github

深度学习:Windows11 VS WSL2 VS Ubuntu 性能对比,pytorch2.0 性能测试!

完成!#

至此环境配置完毕,我继续跟随《动手学深度学习》了。

mkdir d2l-zh && cd d2l-zh

curl https://zh-v2.d2l.ai/d2l-zh-2.0.0.zip -o d2l-zh.zip

unzip d2l-zh.zip && rm d2l-zh.zip

cd pytorch

。。。。。